There are a lot of open source Machine Learning (ML) tools out there. Choosing one is a major decision that's usually not easy to change. To start, let's take a wisdom of the crowds approach to see what is popular and growing.

If you are basing your Machine Learning project on an open source project, ideally it should not only fit your technical requirements but also come with a large and growing community that can support new needs, answer questions, and quickly resolve issues. GitHub has become the de facto code repository for open source, and data on its public repositories is freely available, so we will look there. This data is not perfect, but we can use GitHub metrics as a proxy for which projects have the largest and most active communities.

The List

I put together a list of machine learning libraries, frameworks, and toolkits, starting with the popular ones referenced most often in Machine Learning searches. Here is my top 25 list:

| Rank | Repo | Website | Corp Sponsor | Inactive |
|---|---|---|---|---|
| 1 | TensorFlow | tensorflow.org | | |
| 2 | Keras | keras.io | | |
| 3 | scikit-learn | scikit-learn.org | | |
| 4 | OpenCV | opencv.org | | |
| 5 | Caffe | caffe.berkeleyvision.org | Berkeley | |
| 6 | PyTorch | pytorch.org | | |
| 7 | CNTK | microsoft.com/en-us/cognitive-toolkit | Microsoft | |
| 8 | MXNet | mxnet.incubator.apache.org | Apache | |
| 9 | Deeplearning4j | deeplearning4j.org | Skymind | |
| 10 | Theano | deeplearning.net/software/theano | UofM | x |
| 11 | Caffe2 | caffe2.ai | | |
| 12 | Torch | torch.ch | None | |
| 13 | Darknet | pjreddie.com/darknet | | |
| 14 | DeepSpeech | research.mozilla.org/machine-learning | Mozilla | |
| 15 | Leaf | autumnai.github.io/leaf | | x |
| 16 | DSSTNE | | Amazon | |
| 17 | Chainer | chainer.org | Preferred Networks | |
| 18 | Kaldi | kaldi-asr.org | | |
| 19 | Lasagne | lasagne.readthedocs.io | | |
| 20 | Accord.NET | accord-framework.net | | |
| 21 | Pocket Sphinx | cmusphinx.github.io | CMU | |
| 22 | Singa | singa.apache.org | NUS | |
| 23 | Brainstorm | people.idsia.ch/~juergen/brainstorm.html | | x |
| 24 | Blocks | blocks.readthedocs.io | | |
| 25 | Core ML | developer.apple.com/machine-learning | Apple | |

Some of this is mixing apples and oranges. For example, Keras is really an interface that can run on top of TensorFlow, CNTK, or Theano. Other libraries like Pocket Sphinx are only for speech recognition, while scikit-learn is a more general framework that can be used in many applications. OpenCV does a lot of computer vision tasks, only some of which most would consider to be Machine Learning (and many of OpenCV's ML-based APIs actually live in a separate repo, not the main one).

I also included Apple's Core ML. The core project is not open source, but some of its developer tools are.

Some of these are no longer actively maintained by the original project sponsors. That does not mean they are dead, but as you'll see later, these are generally in decline.

Basic GitHub Stats

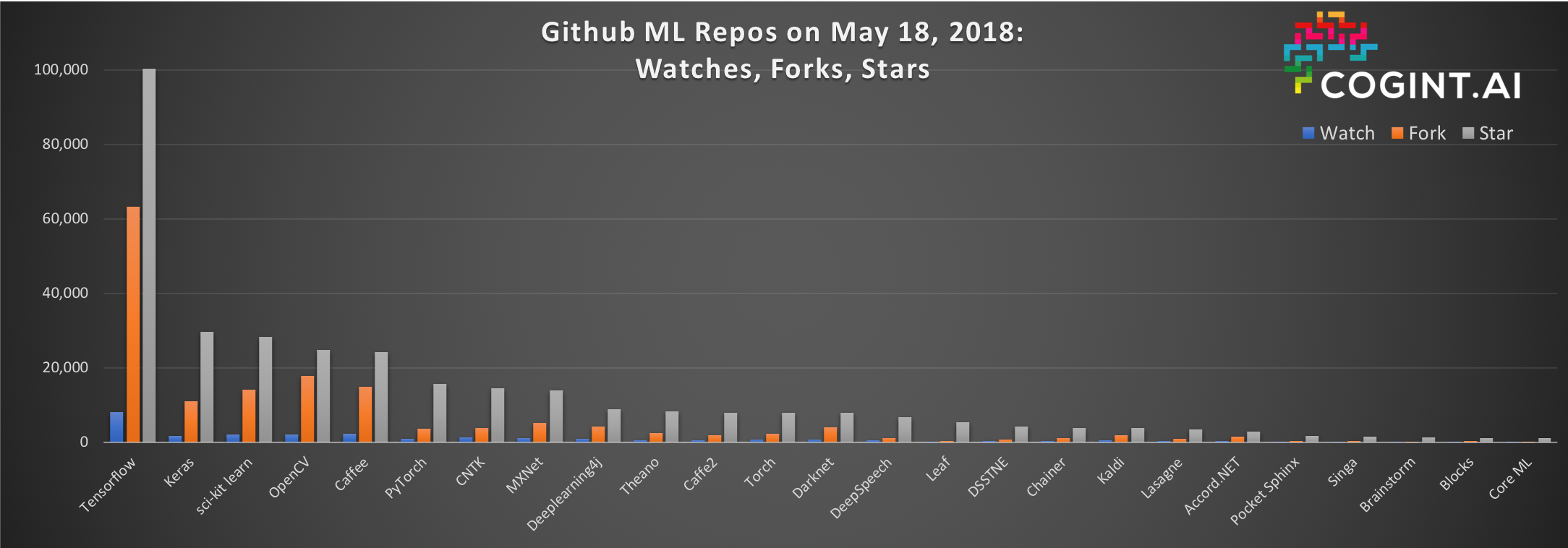

Let's use the above table for reference and dive into how each project ranks on GitHub. GitHub publicly shares a lot of stats for each repo. For those of you who aren't developers, here are the stats I pulled:

- Watch - number of github users watching the repo for changes

- Forks - number of github users who have a personal copy of the repo

- Stars - number of github users who favorited the repo

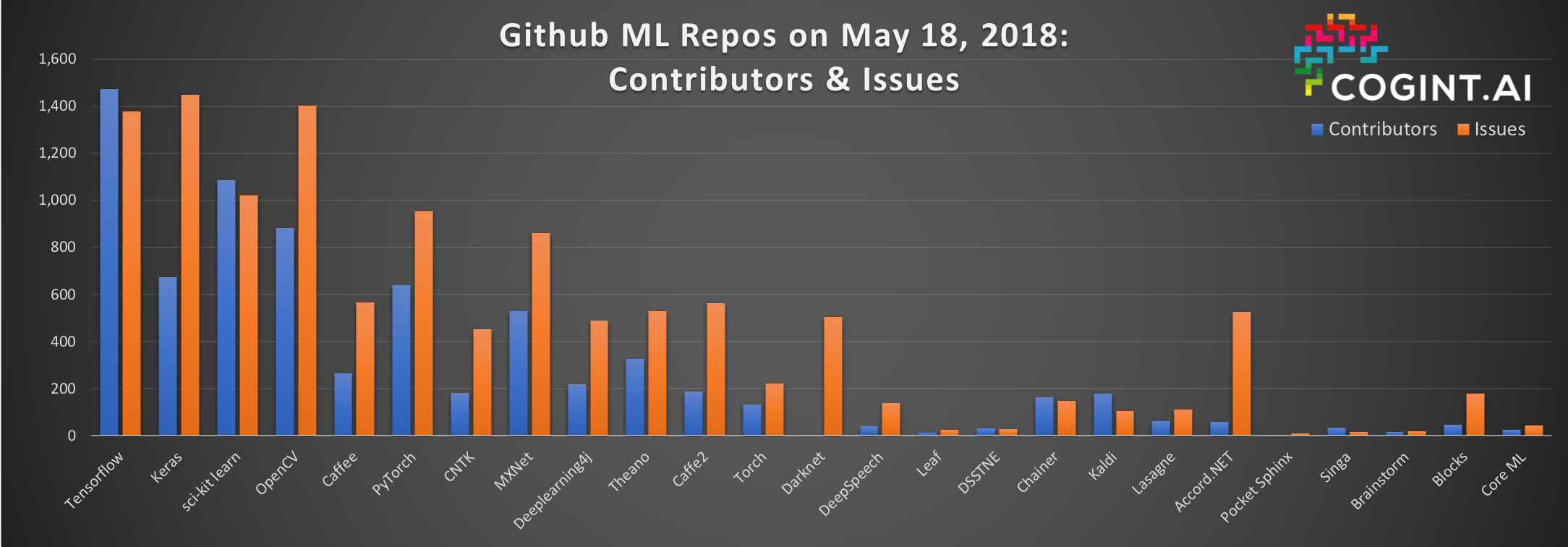

- Contributors - number of github users who have added some code to the project

- Issues - total number of github issues; these are usually used to track bugs, feature requests, and questions
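If you want to pull numbers like these yourself, here is a minimal sketch against the public GitHub REST API, assuming its "get a repository" endpoint (`https://api.github.com/repos/{owner}/{repo}`). The helper names `fetch_repo` and `extract_stats` are hypothetical. Two caveats: `open_issues_count` counts only *open* issues plus open pull requests, so it is not the same as the total issue count above, and contributor counts come from a separate, paginated `/contributors` endpoint that this sketch leaves out.

```python
import json
import urllib.request

# Mapping from the stats above to fields in the GitHub REST API repository object.
# Note: "watchers_count" in that object mirrors stars for historical reasons;
# "subscribers_count" is the actual number of watchers.
STAT_FIELDS = {
    "watch": "subscribers_count",
    "forks": "forks_count",
    "stars": "stargazers_count",
    "open_issues": "open_issues_count",  # open issues AND open pull requests only
}


def fetch_repo(owner: str, repo: str) -> dict:
    """Fetch repository metadata (unauthenticated requests are rate-limited)."""
    url = f"https://api.github.com/repos/{owner}/{repo}"
    req = urllib.request.Request(url, headers={"Accept": "application/vnd.github+json"})
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def extract_stats(repo_json: dict) -> dict:
    """Pick the headline counts out of a repository JSON document (missing fields -> 0)."""
    return {name: repo_json.get(field, 0) for name, field in STAT_FIELDS.items()}
```

For example, `extract_stats(fetch_repo("tensorflow", "tensorflow"))` would return the watch, fork, star, and open-issue counts for TensorFlow's main repo at the moment you run it. Keeping the parsing separate from the network call makes it easy to rerun the extraction on saved JSON snapshots.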

Before we look at these, first some caveats. Every project handles its issues differently, with some using issues as a general support forum while others push their developers to mailing lists or message boards for this activity. Also, issues are a measure of activity, but a project with a lot of unaddressed problems is not necessarily a good one, so make sure you click into these repos before you draw any deep conclusions from any one figure.

Other than that, these should be fair comparison metrics between the repos chosen. Of course, repos like scikit-learn that have been around for a long time (7 years) will have had more time to accumulate stars, contributors, etc. than relatively newer ones like TensorFlow (3 years).
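One rough way to account for that age difference is to divide a cumulative count by the repo's age. This is not something the comparison here does; it is a hedged sketch of an optional adjustment, and the star counts and dates in it are made up for illustration. A repo's creation date is available as `created_at` in the GitHub API's repository object. Stars rarely accrue at a steady rate, so treat the result as a crude average, not a growth measure.

```python
from datetime import date


def per_year(count: int, created: date, as_of: date) -> float:
    """Average yearly rate at which a cumulative metric (stars, forks, ...) accrued."""
    age_years = (as_of - created).days / 365.25  # approximate year length incl. leap years
    if age_years <= 0:
        raise ValueError("as_of must be later than created")
    return count / age_years


# Hypothetical example: two repos with the same star total but different ages.
older = per_year(21_000, date(2011, 1, 1), date(2018, 1, 1))  # about 7 years old
newer = per_year(21_000, date(2015, 1, 1), date(2018, 1, 1))  # about 3 years old
print(f"older: {older:,.0f} stars/yr, newer: {newer:,.0f} stars/yr")
```

With identical totals, the younger repo shows more than twice the yearly rate, which is the effect the age caveat warns about.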

To make this easy to visualize across scales, I split this data across 2 charts:

TensorFlow wins, by a huge margin

TensorFlow is the definitive winner here across all but one metric, far surpassing the rest of the field. The only metric where TensorFlow is not dominant is issues, but as mentioned above, this metric has a lot of caveats.

OK, TensorFlow wins, but is Google gaming these metrics by encouraging newbies to star its repos and do simple forks without ever contributing anything? Let's look at a more meaningful metric: active contributors over time, i.e. the actual number of people regularly contributing code.

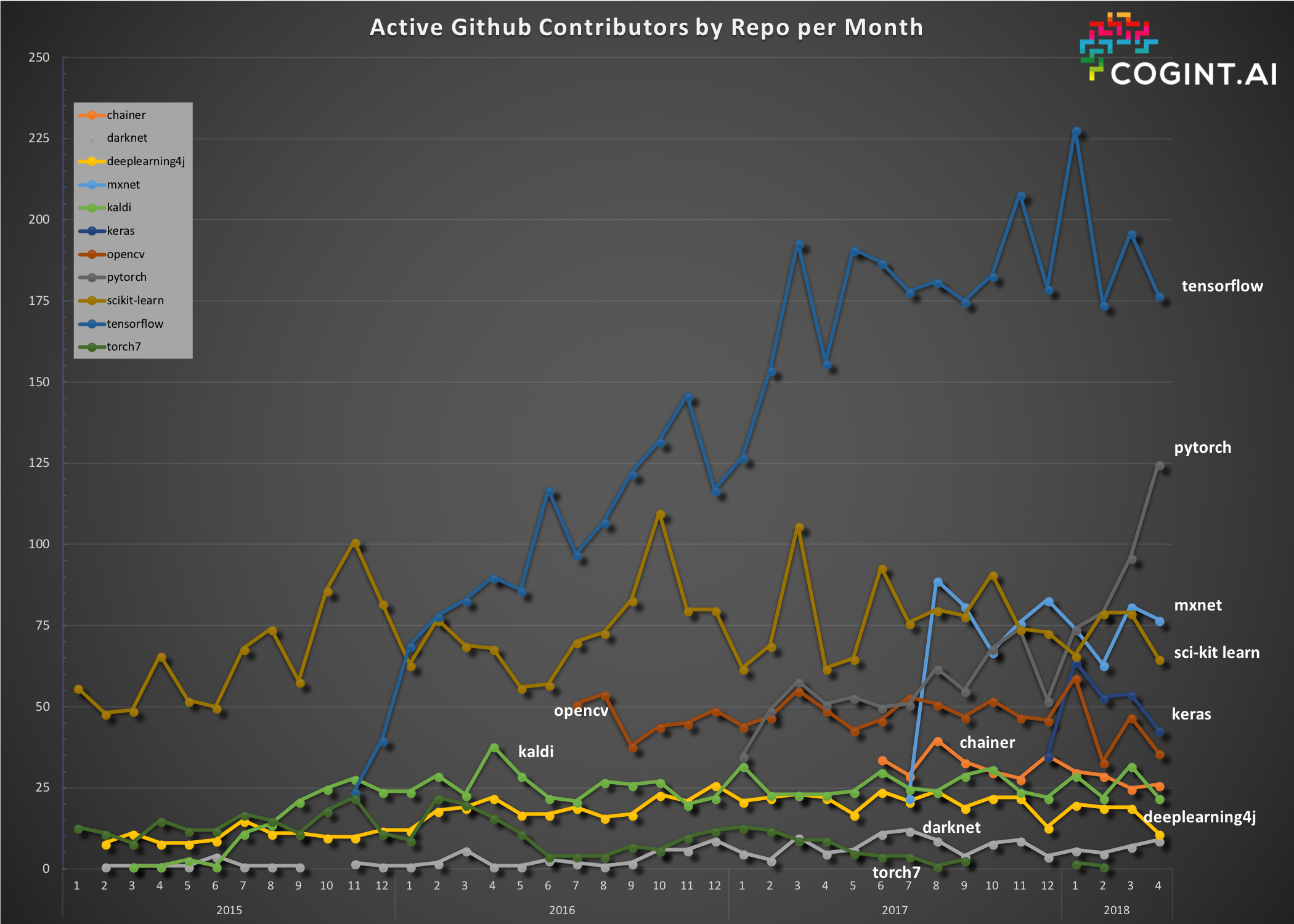

Active Contributors

A better way of comparing communities on GitHub is to look at actual code contributors, by month, over time. This more clearly shows which repos have the most active developers and which way they are trending. I have used a BigQuery methodology to compute something similar over at webrtcHacks a few times, and I am using the same approach here. Check out my Data Nerding with WebRTC on GitHub post over there if you are curious about how to run these queries.
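The actual BigQuery queries are described in the webrtcHacks post and are not reproduced here. As a hedged sketch of the core aggregation, assume event rows flattened from GitHub's public event stream (as archived by GH Archive) into dicts with `type`, `repo`, `actor`, and `created_at`, and assume an "active contributor" is a distinct user with at least one `PushEvent` to the repo in a given month. In SQL this is a `COUNT(DISTINCT actor)` grouped by repo and month; the function name `monthly_active_contributors` is hypothetical.

```python
from collections import defaultdict


def monthly_active_contributors(events, repos, event_types=("PushEvent",)):
    """Count distinct actors per (repo, month) among the given event types.

    `events` is an iterable of dicts shaped like
    {"type": ..., "repo": "owner/name", "actor": login, "created_at": "YYYY-MM-DDTHH:MM:SSZ"}.
    """
    wanted = {r.lower() for r in repos}
    actors = defaultdict(set)  # (repo, "YYYY-MM") -> set of actor logins
    for ev in events:
        repo = ev["repo"].lower()
        if ev["type"] not in event_types or repo not in wanted:
            continue
        month = ev["created_at"][:7]  # "YYYY-MM" prefix of the ISO-8601 timestamp
        actors[(repo, month)].add(ev["actor"])
    return {key: len(logins) for key, logins in sorted(actors.items())}


sample = [
    {"type": "PushEvent", "repo": "tensorflow/tensorflow", "actor": "alice", "created_at": "2018-01-03T10:00:00Z"},
    {"type": "PushEvent", "repo": "tensorflow/tensorflow", "actor": "bob", "created_at": "2018-01-21T09:30:00Z"},
    {"type": "WatchEvent", "repo": "tensorflow/tensorflow", "actor": "carol", "created_at": "2018-01-22T08:00:00Z"},
]
print(monthly_active_contributors(sample, ["tensorflow/tensorflow"]))
# {('tensorflow/tensorflow', '2018-01'): 2}
```

Push events only capture people with write access to the repo; passing `event_types=("PushEvent", "PullRequestEvent")` would also count outside contributors who open pull requests, at the cost of including people whose code is never merged.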

Here is what that graph looks like since 2015:

Note I removed some less-active repos to help improve the visualization.

Who wins here? Clearly TensorFlow again: it has both the largest number of contributors and a growing trend, though that growth seems to be leveling off. PyTorch is also doing very well, with a lot of recent growth. The others all appear to be flat or in slight decline.

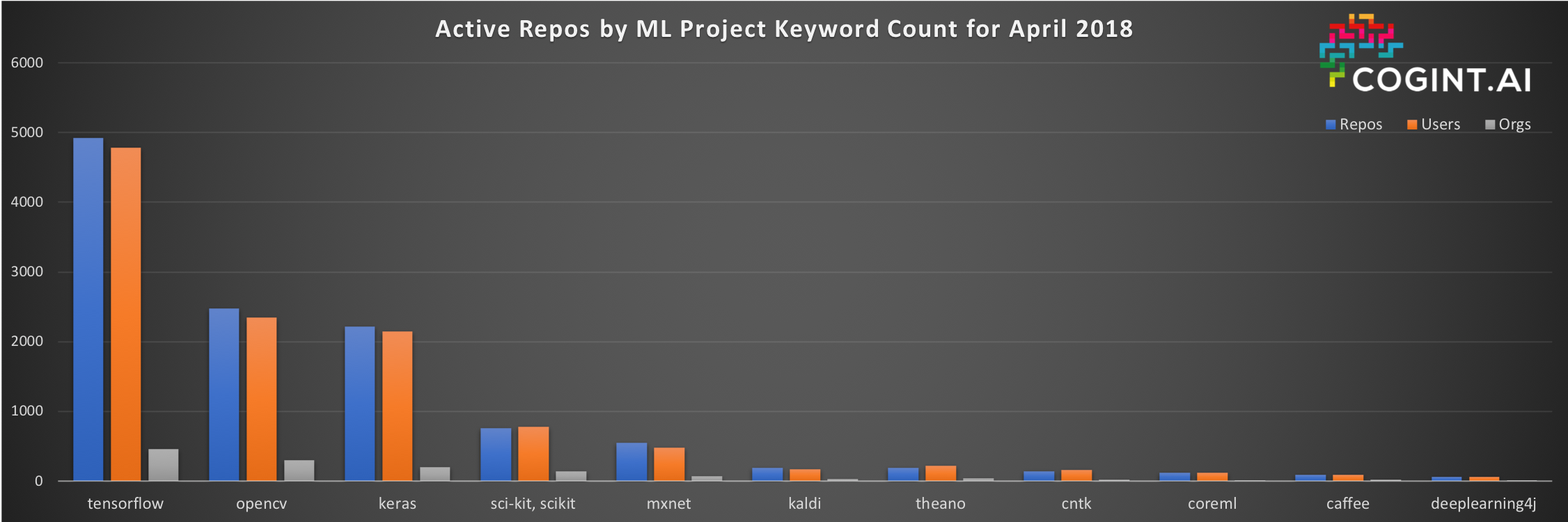

Active Repo Counts

There is one more metric we can look at: how many active repos are using each of these frameworks? It is possible that a repo attracts a lot of interest but that no one is actively using it inside their own projects. A search for the number of repos referencing the ML tool provides another indicator. This query is a bit tricky, as it involves using a unique search term (e.g. "tensorflow" or "kaldi") in the repo name or push/pull event. This query is also expensive in BigQuery, so I only included a few of the libraries for reference (for now). Again, the data produced isn't perfect, but it is certainly a directional indicator.

As with the chart above, see the webrtcHacks post for additional background on this methodology. Remember that this only looks at public repos.
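To make the search-term idea concrete, here is a simplified sketch, assuming the same flattened event rows as a GH Archive export (`type`, `repo`). It only matches the term against repo names with a case-insensitive substring test; matching inside push/pull event contents, which the query above also considers, is left out. The function name `active_repo_counts` is hypothetical.

```python
from collections import defaultdict


def active_repo_counts(events, terms, event_types=("PushEvent", "PullRequestEvent")):
    """Count distinct repos whose name contains each search term, among push/pull events."""
    hits = defaultdict(set)  # term -> set of matching repo names
    for ev in events:
        if ev["type"] not in event_types:
            continue
        name = ev["repo"].lower()
        for term in terms:
            if term.lower() in name:
                hits[term].add(name)
    return {term: len(hits[term]) for term in terms}


sample = [
    {"type": "PushEvent", "repo": "alice/tensorflow-demo"},
    {"type": "PushEvent", "repo": "dave/pytorch-examples"},
]
print(active_repo_counts(sample, ["tensorflow", "torch"]))
# {'tensorflow': 1, 'torch': 1}
```

The output shows why the search term needs to be unique: "torch" also matches a PyTorch repo, so a naive count for Torch would be inflated by PyTorch activity.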

Nothing new here either: TensorFlow is way ahead, with OpenCV and Keras coming in 2nd and 3rd.

Conclusion

TensorFlow is the undisputed winner here on nearly every metric. You will need to decide if TensorFlow is right for your project, but if you choose it you can at least rest assured that many others are making the same choice.

Give me the data

If you want to see the raw results in a sheet or do your own cuts, everything is embedded on this page: https://cogint.ai/github-ml-repo-data/. As long as we do not exceed Google Sheets' external HTML import limit, the first tab should auto-update. The other tabs are frozen as of May 22, 2018.

Questions or want more? Use the comments below or contact me at chad at cwh.consulting.

Chad Hart is an analyst and consultant with cwh.consulting, a product management, marketing, and strategy advisory helping to advance the communications industry. He is currently working on an AI in RTC report.

Image credits

Hero image: IMG_5217 by Flickr user Megan Elice Meadows under CC BY-SA 2.0
